by rainchu | Sep 23, 2024 | SERVER

On Ubuntu Linux, apache2 is commonly the default HTTP server, but these days I more often use nginx or my own HTTP server, so I'm recording here how I remove apache2.

Removal command

sudo apt-get remove --purge 'apache*'

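After running the command, read through the list of packages apt is about to remove (the apache* wildcard can match more than apache2 itself) and make sure nothing you still need would be removed by mistake. If the list looks right, confirm to continue. To preview the list without changing anything, you can first run the same command with -s (simulate).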

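When it finishes, the apache2 packages are gone, but the site content, and possibly some leftover configuration files, are still on disk.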

Remove the related configuration and content

By default, the configuration is stored in the /etc/apache2 directory.

By default, the content is stored under /var/www. Check it and back it up before deleting it.
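If you prefer to script the backup and removal, here is a minimal Python sketch. It assumes the default paths above and enough privileges to read and delete them (for example, running under sudo); the function name backup_and_remove and its dry_run switch are hypothetical, not part of apt or Apache. It archives a directory into a .tar.gz first and deletes the original only when dry_run is False.

```python
import shutil
from pathlib import Path


def backup_and_remove(target: str, backup_dir: str, dry_run: bool = True) -> Path:
    """Archive `target` as <backup_dir>/<dir name>.tar.gz, then optionally delete it.

    Hypothetical helper: with dry_run=True (the default) only the backup is written.
    """
    src = Path(target)
    if not src.is_dir():
        raise FileNotFoundError(f"{src} is not a directory")

    dest = Path(backup_dir)
    dest.mkdir(parents=True, exist_ok=True)

    # make_archive appends ".tar.gz" to base_name for the "gztar" format
    # and returns the path of the archive it wrote.
    archive = shutil.make_archive(
        base_name=str(dest / src.name),
        format="gztar",
        root_dir=str(src.parent),
        base_dir=src.name,
    )

    if not dry_run:
        # Delete the original only after the archive has been written.
        shutil.rmtree(src)
    return Path(archive)
```

For example, backup_and_remove("/var/www", "/root/apache-backup") only writes /root/apache-backup/www.tar.gz (the backup location is just an example path). Once you have checked the archive, call it again with dry_run=False to delete /var/www, and do the same for /etc/apache2 if it still exists.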

by rainchu | Sep 23, 2024 | Docker, MIS

I often forget to give a container a name when creating it. I used to just delete it and recreate it, but you can simply use docker rename instead.

Give the container a name when creating it

docker run --name [new container name] [image]

Rename an existing container

docker rename [existing container name or ID] [new container name]
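Before renaming, it can help to check the new name, since Docker rejects names outside its naming rule. This Python sketch builds the docker rename command and runs it with subprocess. The regular expression reflects my reading of Docker's container-name rule (an alphanumeric first character followed by at least one letter, digit, "_", "." or "-"), so treat it as an assumption; build_rename_cmd and rename_container are hypothetical helper names.

```python
import re
import subprocess

# Assumed Docker container-name rule: first character alphanumeric, followed by
# one or more letters, digits, "_", "." or "-" (so at least two characters total).
CONTAINER_NAME = re.compile(r"[a-zA-Z0-9][a-zA-Z0-9_.-]+")


def build_rename_cmd(current: str, new_name: str) -> list[str]:
    """Return the argv for `docker rename`, rejecting names the rule above disallows."""
    if not CONTAINER_NAME.fullmatch(new_name):
        raise ValueError(f"invalid container name: {new_name!r}")
    return ["docker", "rename", current, new_name]


def rename_container(current: str, new_name: str) -> None:
    """Run `docker rename`; check=True raises CalledProcessError if docker fails."""
    subprocess.run(build_rename_cmd(current, new_name), check=True)
```

For example, rename_container("3f2a9c1b", "web-01") runs docker rename 3f2a9c1b web-01 and raises CalledProcessError if Docker reports an error, for instance when the new name is already in use.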

References

by rainchu | Sep 23, 2024 | AI, Chat

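Here is how to combine OpenAI with LiveKit to build a multilingual voice assistant, a friendly "virtual young lady" who answers your questions in real time. It is as simple and easy to use as Twilio.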

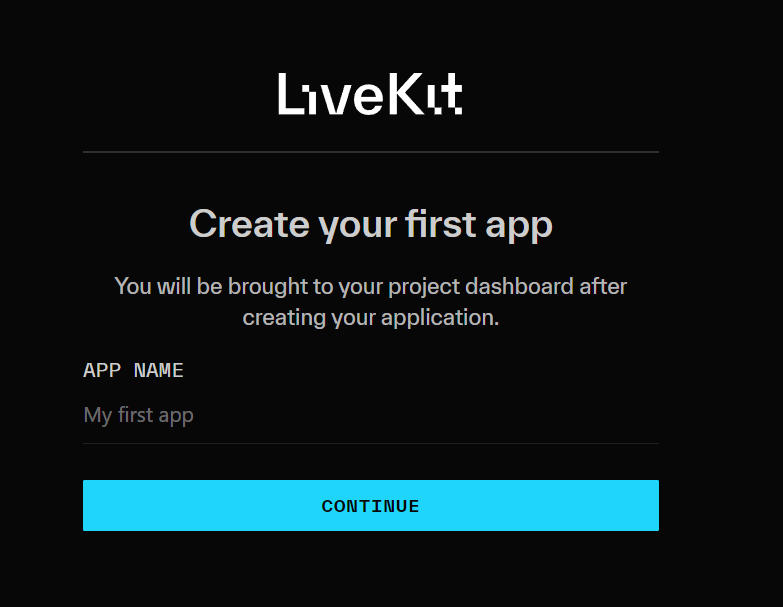

Get a LiveKit key

Log in to LiveKit (LiveKit Login) with a Google account and give your project a name.

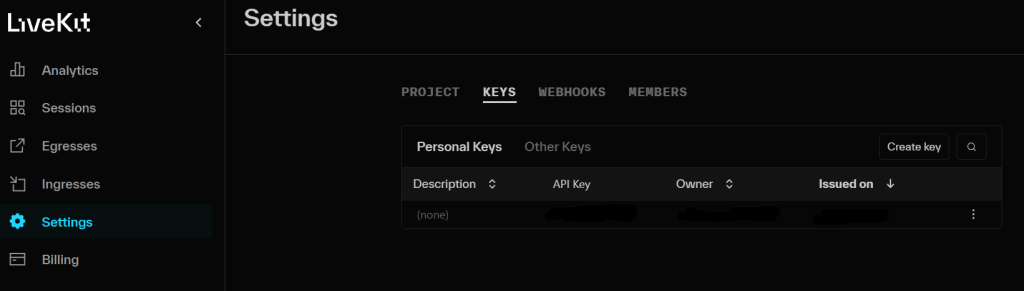

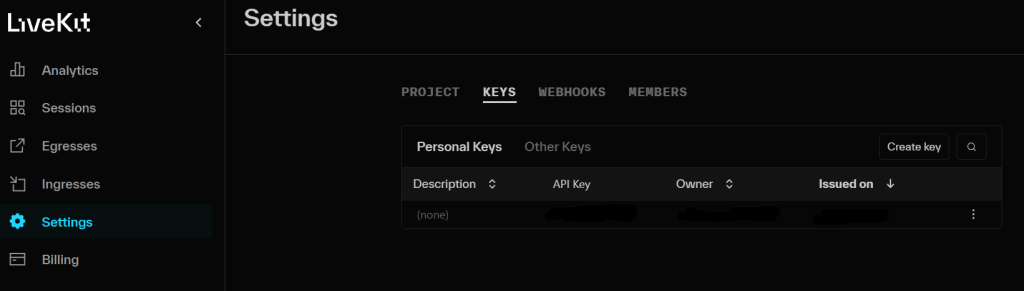

Then go to the project's Settings -> KEYS to get the API key.

Code

First, install the dependencies

pip install livekit-agents livekit-plugins-openai livekit-plugins-silero python-dotenv

Set the environment variables (for example in a .env file, which load_dotenv() in the code below reads)

LIVEKIT_URL=""
LIVEKIT_API_KEY=""
LIVEKIT_API_SECRET=""
OPENAI_API_KEY=""
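Because the template above leaves every value empty, a quick check can catch a missing key before the agent starts. This is a minimal Python sketch; the helper name missing_env_vars is hypothetical, and the variable names are the four listed above.

```python
import os
from typing import Mapping, Optional

# The four settings the agent code relies on (names from the .env template above).
REQUIRED_VARS = ("LIVEKIT_URL", "LIVEKIT_API_KEY", "LIVEKIT_API_SECRET", "OPENAI_API_KEY")


def missing_env_vars(env: Optional[Mapping[str, str]] = None) -> list[str]:
    """Return the required variables that are unset or blank (hypothetical helper).

    Defaults to os.environ, which is where load_dotenv() puts the .env entries.
    """
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name, "").strip()]
```

In the agent script, calling missing_env_vars() right after load_dotenv() and exiting when the list is non-empty fails fast, instead of failing on the first LiveKit or OpenAI call.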

Main code

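import asyncio

from dotenv import load_dotenv
from livekit.agents import AutoSubscribe, JobContext, WorkerOptions, cli, llm
from livekit.agents.voice_assistant import VoiceAssistant
from livekit.plugins import openai, silero

# Load LIVEKIT_URL, LIVEKIT_API_KEY, LIVEKIT_API_SECRET and OPENAI_API_KEY from .env
load_dotenv()


async def entry(ctx: JobContext):
    # System prompt that sets the assistant's persona and tone
    chat_ctx = llm.ChatContext().append(
        role="system",
        text="You are a professional assistant. Answer in a professional tone.",
    )

    # Join the room and subscribe to audio tracks only
    await ctx.connect(auto_subscribe=AutoSubscribe.AUDIO_ONLY)

    assistant = VoiceAssistant(
        vad=silero.VAD.load(),                # voice activity detection
        stt=openai.STT(),                     # speech-to-text
        tts=openai.TTS(voice="nova"),         # text-to-speech voice
        llm=openai.LLM(model="gpt-4o-mini"),  # chat model
        chat_ctx=chat_ctx,
    )
    assistant.start(ctx.room)

    # Wait a moment, then greet the user; the user is allowed to interrupt
    await asyncio.sleep(1)
    await assistant.say("Hello, nice to meet you!", allow_interruptions=True)


if __name__ == "__main__":
    cli.run_app(WorkerOptions(entrypoint_fnc=entry))

Testing and verification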

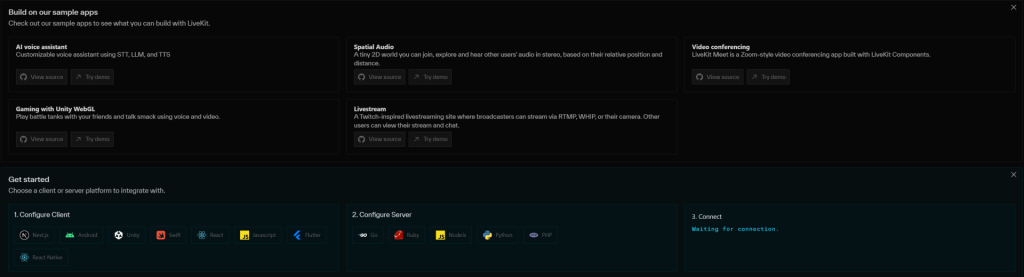

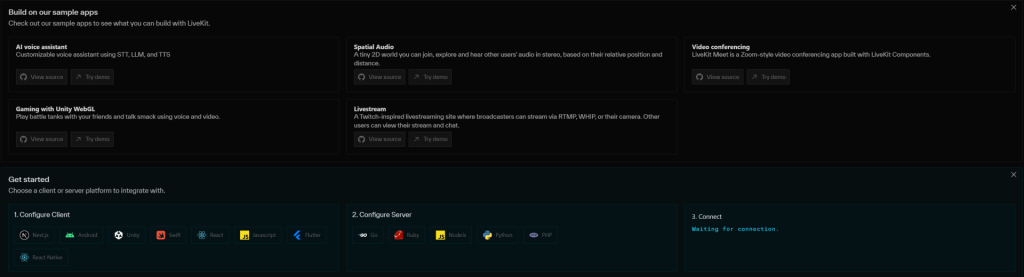

In the project, the Get started section provides sample code for many platforms, as well as servers you can use.

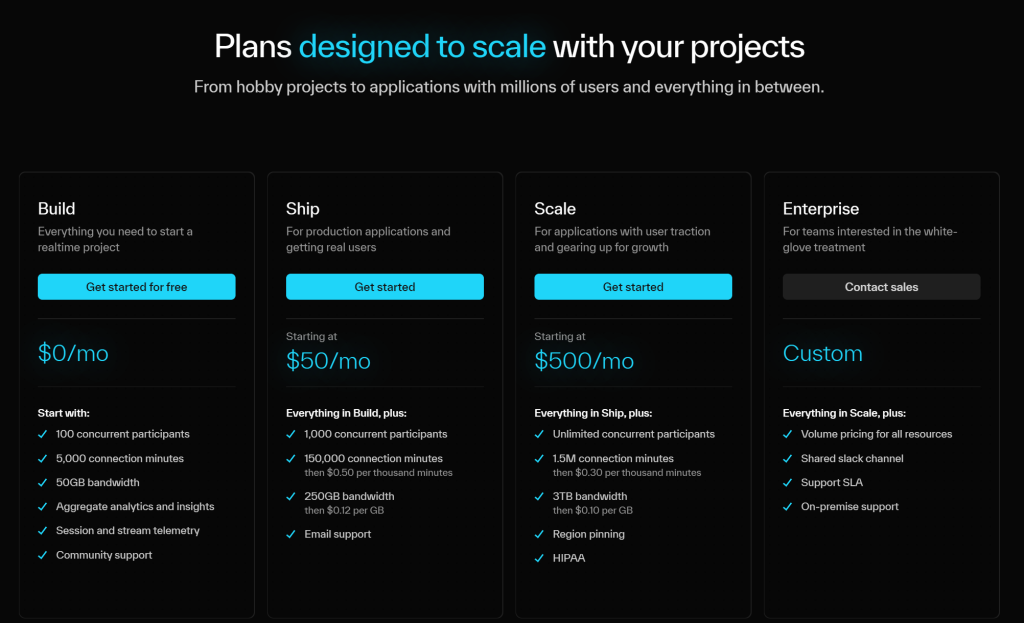

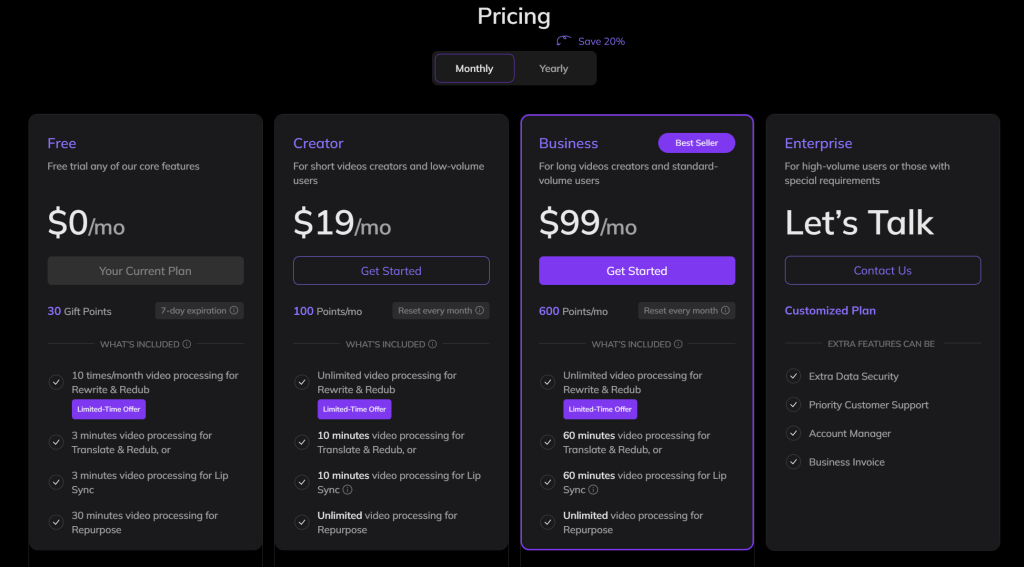

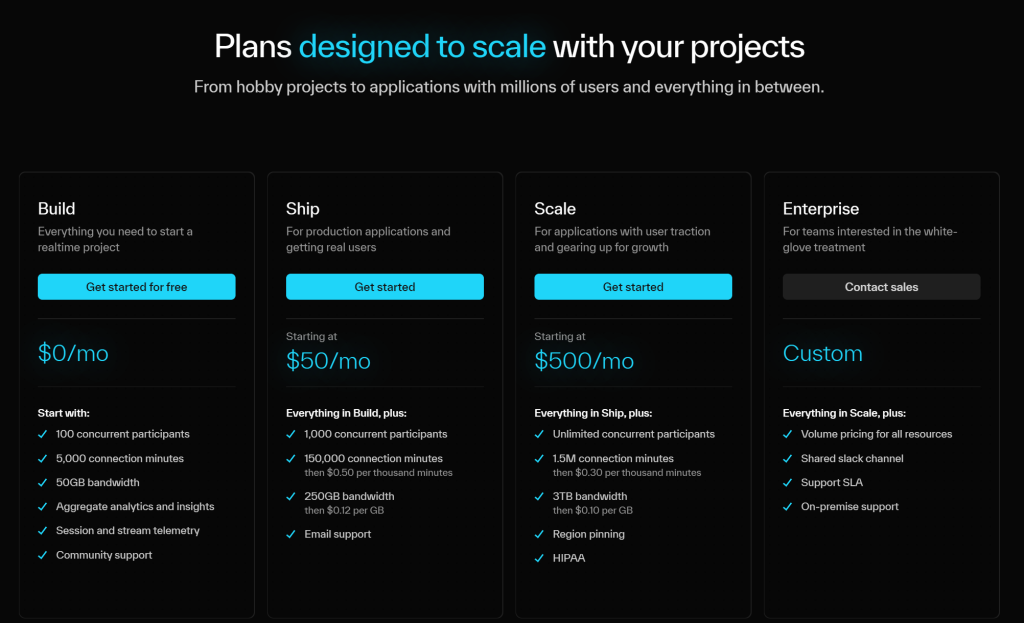

Pricing

https://livekit.io/pricing

References

https://livekit.io

https://github.com/livekit/agents

demo code

by rainchu | Sep 23, 2024 | AI, Face Recognition, Image Processing, Video Production

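An open-source tool that swaps faces in real time, even with multiple faces in the frame. There is also a portable, ready-to-run build with the whole environment pre-packaged for anyone who doesn't want to set it up; it supports Windows, Mac, and GPU.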

Prerequisites

Get the source code with Git

git clone https://github.com/hacksider/Deep-Live-Cam.git

Download the models

- GFPGANv1.4

- inswapper_128.onnx (Note: Use this replacement version if an issue occurs on your computer)

Put both files in the models directory.
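To confirm both files landed in the right place, a small check like this can be run from the repository root. It is a sketch under assumptions: the post lists GFPGANv1.4 without an extension, so the .pth file name is a guess, and missing_models is a hypothetical helper name.

```python
from pathlib import Path

# Expected model files. "GFPGANv1.4.pth" is an assumed file name (the post gives no
# extension); "inswapper_128.onnx" is the name listed above.
EXPECTED_MODELS = ("GFPGANv1.4.pth", "inswapper_128.onnx")


def missing_models(repo_dir: str = ".") -> list[str]:
    """Return expected model files not found in <repo_dir>/models (hypothetical helper)."""
    models = Path(repo_dir) / "models"
    return [name for name in EXPECTED_MODELS if not (models / name).is_file()]
```

An empty list from missing_models() means both files are where the step above says to put them.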

Install the dependencies (from the repository root)

pip install -r requirements.txt

References

https://github.com/hacksider/Deep-Live-Cam

by rainchu | Sep 23, 2024 | AI, Video Production

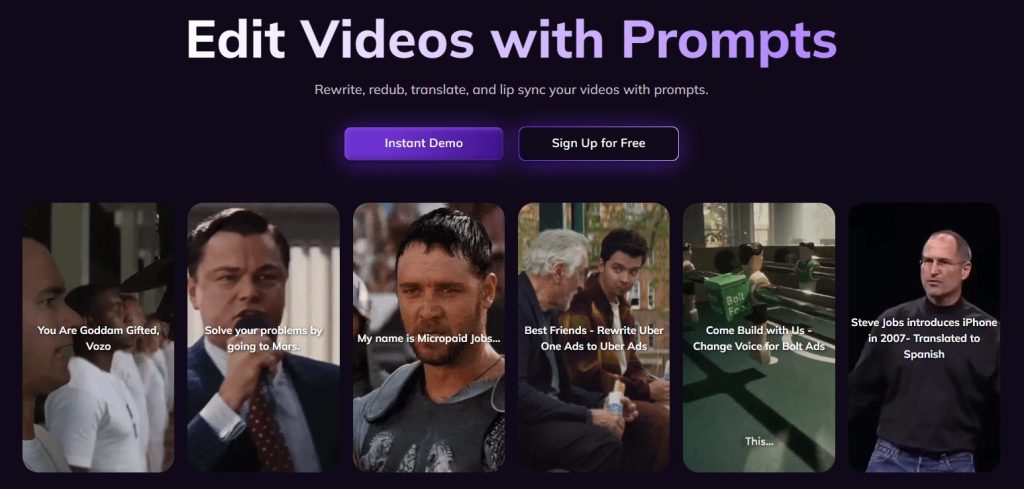

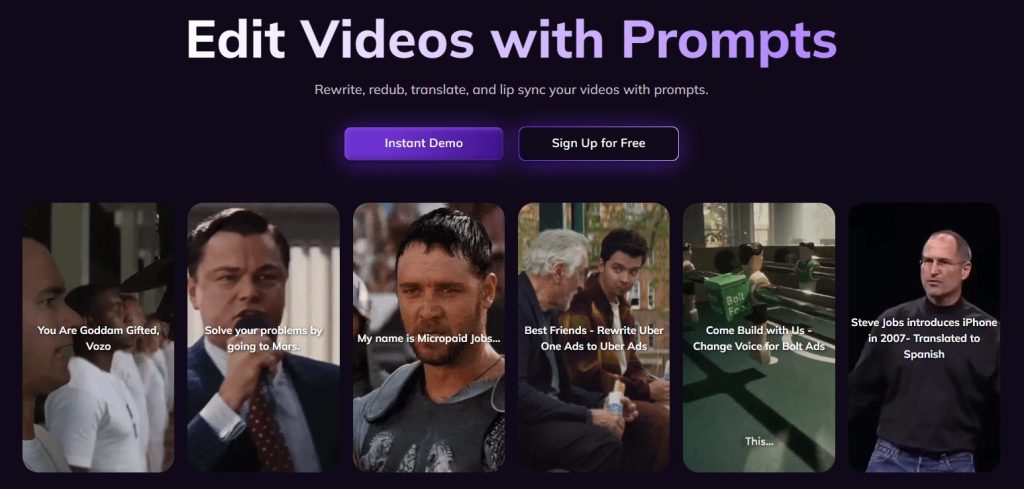

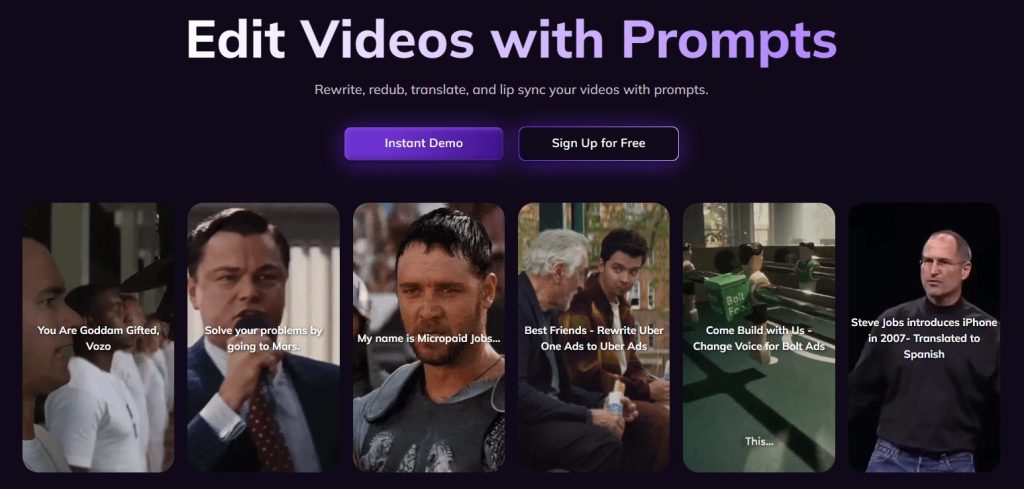

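Marketers have more and more cool AI tools, especially for video production, where new applications keep popping up. VOZO AI is well suited to making videos for social media.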

Rewrite

Just select a video and you can change its dialogue.

Redub (redo the subtitles)

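Quickly pull up the subtitles of a video, edit them into your own script, and have the selected video subtitled again according to your script; translation is also available.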

Repurpose: turn videos into short clips

It can quickly cut a short-form version of your own videos or of YouTube videos. Unfortunately, it currently works mainly with English.

Multi-speaker lip sync

If several people appear in a video, it can lip-sync all of them. This feature is new and very practical.

Automatic multilingual translation

Built-in translation into multiple languages

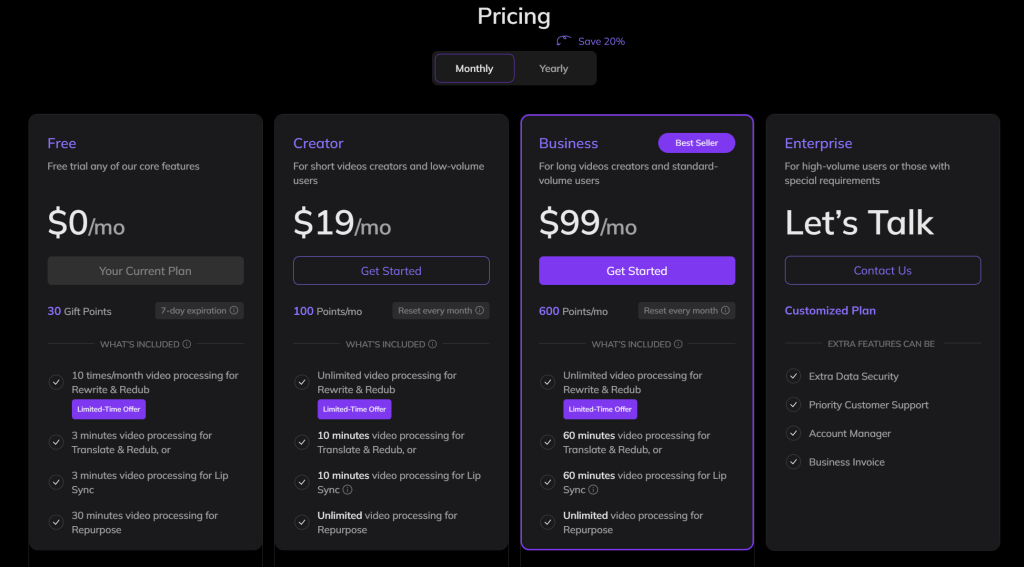

Pricing

https://www.vozo.ai/pricing

References

VOZO AI
